Question 4 of 267 from exam AZ-303: Microsoft Azure Architect Technologies

Question

HOTSPOT -

Your network contains an Active Directory domain named adatum.com and an Azure Active Directory (Azure AD) tenant named adatum.onmicrosoft.com.

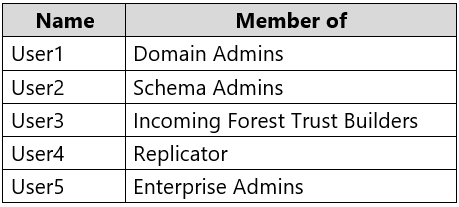

Adatum.com contains the user accounts in the following table.

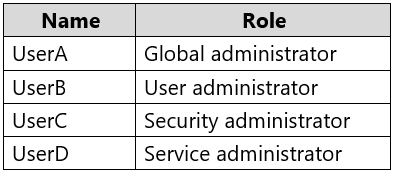

Adatum.onmicrosoft.com contains the user accounts in the following table.

You need to implement Azure AD Connect. The solution must follow the principle of least privilege.

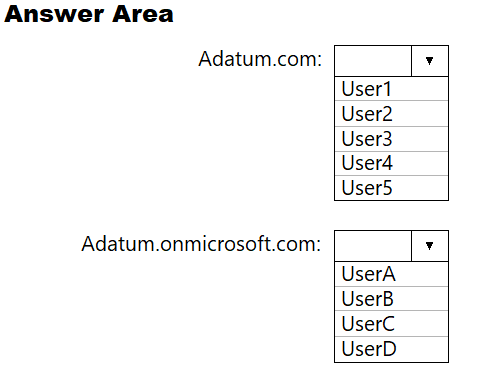

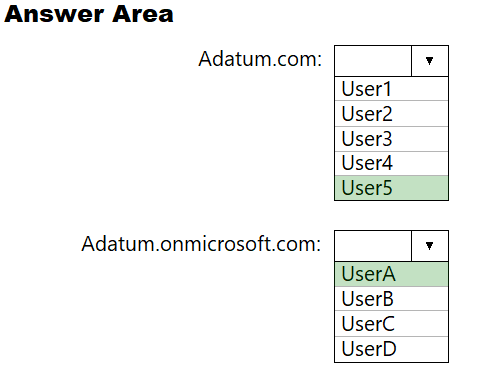

Which user accounts should you use in Adatum.com and Adatum.onmicrosoft.com to implement Azure AD Connect? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Hot Area:

Explanations

Box 1: User5 -

In Express settings, the installation wizard asks for the following:

AD DS Enterprise Administrator credentials

Azure AD Global Administrator credentials

The AD DS Enterprise Admin account is used to configure your on-premises Active Directory. These credentials are used only during the installation and are not used after the installation has completed. An Enterprise Admin, rather than a Domain Admin, is required so that the permissions in Active Directory can be set in all domains.

Box 2: UserA -

Azure AD Global Admin credentials are used only during the installation and are not used after the installation has completed. The account is used to create the Azure AD Connector account used for synchronizing changes to Azure AD. The account also enables sync as a feature in Azure AD.
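
The selection reasoning for both boxes can be sketched in a few lines of Python. This is an illustration only: the account names and role memberships below are hypothetical and are not taken from the tables in the question, and treating "fewest roles held" as a proxy for least privilege is a simplifying assumption.

```python
from typing import Dict, Optional, Set


def pick_least_privileged(accounts: Dict[str, Set[str]], required_role: str) -> Optional[str]:
    """Return the account that holds required_role and the fewest roles overall.

    Returns None when no account holds the required role.
    """
    candidates = [name for name, roles in accounts.items() if required_role in roles]
    if not candidates:
        return None
    # Fewest roles first; the name is a tie-breaker so the result is deterministic.
    return min(candidates, key=lambda name: (len(accounts[name]), name))


# Hypothetical on-premises accounts (NOT the accounts from the question's tables).
on_prem = {
    "svc-a": {"Domain Admins"},
    "svc-b": {"Enterprise Admins"},
    "svc-c": {"Enterprise Admins", "Schema Admins"},
}

# Hypothetical Azure AD accounts (NOT the accounts from the question's tables).
cloud = {
    "cloud-a": {"Global Administrator"},
    "cloud-b": {"User Administrator"},
}

# Express settings require Enterprise Admin on-premises and Global Administrator in Azure AD.
ad_ds_account = pick_least_privileged(on_prem, "Enterprise Admins")
azure_ad_account = pick_least_privileged(cloud, "Global Administrator")
print(ad_ds_account, azure_ad_account)
```

In this sketch a Domain Admins account is never selected for the on-premises side, which mirrors the explanation for Box 1: the role required by Express settings comes first, and least privilege only decides among accounts that already hold it.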

https://docs.microsoft.com/en-us/azure/active-directory/connect/active-directory-aadconnect-accounts-permissions